Aggressive yet sane persistent SSH with systemd and autossh

Not too long ago, I was contracted to assist with a K8s deployment. The developers' approach to persistent SSH tunnels left something to be desired.

Autossh is a great tool for persistent SSH connections; I use it mostly for reverse port and Unix socket forwarding. No punching holes in firewalls, no exposing services to the Internet. I love it.

Folksy guides like these suggest restarting autossh immediately on failure, ignoring TCP's connection teardown state entirely. That gap is exactly what this post addresses. Systemd is a better wrapper: autossh handles the connection lifecycle, systemd handles startup ordering, restart timing, and environment variables. They compose well. One caveat worth stating upfront: SSH tunnels carry TCP inside TCP, which is fine for low-volume use (a socket forward, a management port, a small database connection) but will hit congestion collapse under high load and packet loss. It is why production VPNs use UDP. For the low-volume forwarding this post covers, it is not a concern.

TCP CONNECTION TERMINATION

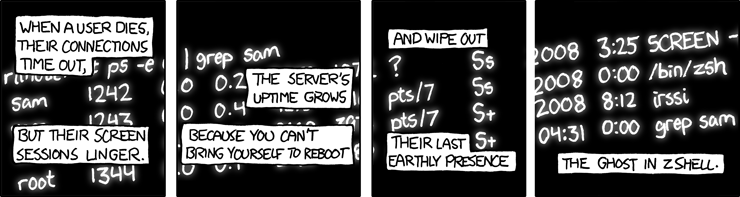

The crux of this story.

When a TCP connection closes, one side initiates by sending a FIN; this side becomes the active closer. What follows is a 4-way teardown: the active closer sends FIN, gets back an ACK, gets the other side's FIN, then sends the final ACK and immediately drops into the TIME-WAIT state. The other side (the passive closer) moves to CLOSED once that final ACK arrives.

TIME-WAIT is not a bug or an oversight; it exists for two good reasons. First, it prevents delayed in-flight segments from the old connection being misread as data in a new connection that reuses the same port quadruplet (source IP, source port, destination IP, destination port). Second, it gives the passive closer a chance to recover if the final ACK is lost: the passive closer will stay stuck in LAST_ACK and retransmit its FIN, and the active closer (still in TIME-WAIT) is there to respond to it. Both purposes require the state to last at least 2×MSL (twice the Maximum Segment Lifetime).

On Linux, this duration is hardcoded and cannot be tuned:

#define TCP_TIMEWAIT_LEN (60*HZ) /* how long to wait to destroy TIME-WAIT
                                  * state, about 60 seconds */

There have been proposals to make this value tunable but they have been refused. A fixed TIME-WAIT duration is considered correct and necessary behaviour for internetworks.

At this point many people check tcp_fin_timeout and think they have found the knob:

$ cat /proc/sys/net/ipv4/tcp_fin_timeout
60

They are wrong. Despite the coincidentally identical default of 60 seconds, tcp_fin_timeout controls how long a socket lingers in FIN_WAIT2, an entirely different state that occurs earlier in the teardown, while the active closer is waiting for the passive closer's FIN. It has nothing to do with TIME-WAIT. Changing tcp_fin_timeout will not shorten your TIME-WAIT window. Do not confuse the two.
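To watch the two states separately on a live host, here is a minimal sketch that counts sockets per state straight from the kernel's socket table. It assumes a Linux /proc/net/tcp (IPv4 only), where the fourth column is the state in hex: 05 is FIN_WAIT2 and 06 is TIME_WAIT. The helper name count_tcp_state is made up for illustration.

```shell
# Count sockets in a given TCP state by reading the kernel's socket table.
# The 4th column of /proc/net/tcp is the state in hex:
#   05 = FIN_WAIT2 (the state tcp_fin_timeout governs)
#   06 = TIME_WAIT (the fixed 60-second state)
# count_tcp_state is a hypothetical helper name, not a standard tool.
count_tcp_state() {
    state_hex=$1
    table=${2:-/proc/net/tcp}   # pass /proc/net/tcp6 for IPv6 sockets
    # NR > 1 skips the header line; n + 0 prints 0 when nothing matched.
    awk -v st="$state_hex" 'NR > 1 && $4 == st { n++ } END { print n + 0 }' "$table"
}

# Usage on a live Linux host:
#   count_tcp_state 06    # sockets currently in TIME_WAIT
#   count_tcp_state 05    # sockets currently in FIN_WAIT2
```

Lowering tcp_fin_timeout and re-running both counts after a tunnel drop should show the FIN_WAIT2 entries clearing sooner while the TIME_WAIT entries still take about a minute.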

How this bites autossh specifically

Here is what goes wrong. Your SSH tunnel drops for any number of reasons: the remote reboots, a stateful firewall between you times out the idle flow, a link goes down. Autossh detects the SSH child dying and immediately forks a new one. But the old connection's port quadruplet is still sitting in TIME-WAIT on the active-closing side for up to 60 seconds. If autossh restarts SSH before that window expires, one of two things happens:

- The new connect() gets back EADDRINUSE and fails outright, because the port tuple is still occupied. SSH exits, autossh tries again, same result. You are now in a tight restart loop fighting the kernel.

- Or, worse still, the new connection succeeds on a different ephemeral port, but stale ACKs and RSTs from the old session are still in the network. These can confuse or reset the brand-new connection within its first few seconds of life.

The goal of this entire configuration is to make these scenarios either impossible or harmless. There are three levers:

- Detect connection death fast so SSH exits cleanly

- Configure autossh's restart behaviour so it does not hammer the kernel during a failure

- Tell systemd to wait long enough that TIME-WAIT has fully expired before the next connection attempt

Keepalives: detect drops and exit cleanly

The worst case is a silently dead connection where the TCP session is gone but neither end knows it yet, so SSH sits there doing nothing. This can happen across NAT devices, stateful firewalls, or any middlebox that times out idle flows. The fix is to run keepalives at both ends so a dead connection is detected and terminated within a predictable window rather than quietly lingering.

On the client side (in your autossh ExecStart), pass these SSH options:

-o "ServerAliveInterval 10" -o "ServerAliveCountMax 3"

Every 10 seconds of silence from the server, the client sends a keepalive probe. After 3 consecutive missed responses (30 seconds total), the SSH client exits. This gives autossh a clean process exit to react to rather than waiting for the OS to time out a dead TCP socket.
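The 30-second figure is simply interval times count. As a rough sketch, the effective values can be read back from `ssh -G`, which prints the resolved client configuration with lowercase option names, and multiplied. The helper name keepalive_window is made up for illustration, and remote.server is the placeholder host used in the unit file later in this post.

```shell
# Print the worst-case detection window (interval x count, in seconds)
# from `ssh -G` output supplied on stdin.
# keepalive_window is a hypothetical helper name, not part of OpenSSH.
keepalive_window() {
    awk '
        $1 == "serveraliveinterval" { interval = $2 }
        $1 == "serveralivecountmax" { count = $2 }
        END { print interval * count }
    '
}

# Usage:
#   ssh -G -o "ServerAliveInterval 10" -o "ServerAliveCountMax 3" remote.server \
#       | keepalive_window
```

A result of 0 means no ServerAliveInterval is in effect, in which case the client never probes the server and a dead connection can linger.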

On the server side, add to sshd_config:

ClientAliveInterval 10
ClientAliveCountMax 3

The mirror image: the server probes unresponsive clients and drops them after 30 seconds. This is important for reverse tunnels. Without it, the server may hold the listening socket open long after the client has vanished, which blocks the tunnel being re-established on reconnect.

ExitOnForwardFailure yes is the other critical client option. Without it, SSH will happily connect to the server but silently fail to set up the port or socket forward, then sit there looking healthy while delivering nothing. With it, SSH exits immediately if the forward cannot be established, giving autossh a clean signal to restart.

autossh: tune restart behaviour

AUTOSSH_GATETIME controls how long SSH must stay connected before autossh considers the session successfully established. The default is 30 seconds: if SSH fails within 30 seconds on the very first attempt, autossh bails out entirely rather than looping. This is sensible at a terminal but counterproductive at boot, when the remote host might simply not be ready yet. Set it to 0 for a boot-time service; autossh will then retry on every failure, including the first. The trade-off is that genuine configuration errors (wrong hostname, missing key) will also be retried forever rather than failing fast, so make sure SSH works manually before deploying.
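One way to do that manual check is a non-interactive preflight, sketched below. It assumes the remote account is allowed to run a command (`true`); an account restricted to forwarding only would need a different check. The function name preflight_ssh and the SSH_BIN override are made up for illustration. BatchMode and ConnectTimeout are standard OpenSSH client options.

```shell
# Preflight: confirm that key-based, non-interactive SSH works before
# enabling a service that retries forever.
# BatchMode=yes makes ssh fail instead of prompting for a password or
# passphrase; ConnectTimeout=10 bounds the connection attempt.
# SSH_BIN is a hypothetical override that allows a dry run without a network.
preflight_ssh() {
    host=$1
    "${SSH_BIN:-ssh}" -o BatchMode=yes -o ConnectTimeout=10 "$host" true
}

# Usage:
#   preflight_ssh remote.server && echo "safe to enable the service"
```

If this exits non-zero, fix the hostname, key, or known_hosts entry first. With AUTOSSH_GATETIME=0 the service would otherwise retry the same broken configuration indefinitely.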

AUTOSSH_POLL with -M 0 does not perform active connection probing, which requires a non-zero monitor port paired with an echo service. What it does affect is the ceiling on autossh's internal backoff. When SSH fails repeatedly in quick succession, autossh progressively sleeps longer between restart attempts. The default ceiling is 600 seconds (ten minutes). Setting AUTOSSH_POLL=30 caps that ceiling at 30 seconds, so a prolonged outage never leaves you waiting ten minutes for the next retry attempt.

systemd: respect the TIME-WAIT window

This is the piece most configurations get wrong. If systemd restarts autossh immediately after it exits, autossh will try to reconnect before the 60-second TIME-WAIT window clears, and you end up in the failure loop described above. The fix is simple:

Restart=always
RestartSec=60

The 60-second delay matches the hardcoded TCP_TIMEWAIT_LEN. If autossh exits for any reason and systemd has to restart it from scratch, the restart is delayed long enough that the kernel has finished cleaning up the old connection state. Restart=always ensures systemd will restart regardless of exit code; graceful exits, crashes, and signal deaths all trigger a restart.
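Since a short RestartSec is easy to copy in from other guides, a small sanity check can catch it before deployment. The sketch below assumes RestartSec is written as a bare number of seconds; systemd also accepts forms such as "1min", which this does not parse. The helper name restart_delay_ok is made up for illustration.

```shell
# Succeed only if the unit file sets RestartSec to at least 60 seconds.
# Assumes a bare integer value (seconds); values with units are not parsed
# and are treated as a failure. restart_delay_ok is a hypothetical helper.
restart_delay_ok() {
    unit_file=$1
    # If RestartSec appears more than once, the last assignment wins.
    secs=$(sed -n 's/^RestartSec=\([0-9][0-9]*\)$/\1/p' "$unit_file" | tail -n 1)
    [ -n "$secs" ] && [ "$secs" -ge 60 ]
}

# Usage:
#   restart_delay_ok ~/.config/systemd/user/signald_autossh.service \
#       || echo "RestartSec is missing or shorter than the TIME-WAIT window"
```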

Here is the complete unit file, with all three levers assembled. The comments explain each decision so the file is self-documenting:

$ cat ~/.config/systemd/user/signald_autossh.service

[Unit]
Description=AutoSSH tunnel to remote signald Unix socket
After=network-online.target
# Note: user systemd instances do not see system-level targets like
# network-online.target by default, so this ordering hint is not
# guaranteed to work in a user unit. For reliable boot ordering,
# either run this as a system unit, or ensure the user session is
# started after the network via PAM/loginctl-linger configuration.

[Service]
# Set to 0 for boot-time use. The default (30s) causes autossh to
# exit immediately if the very first SSH attempt fails within 30
# seconds, which is unhelpful at boot when the remote may not be ready yet.
# With GATETIME=0, autossh retries on all failures including the
# first. Verify SSH works manually before enabling this service.
Environment="AUTOSSH_GATETIME=0"
# With -M 0, AUTOSSH_POLL does not perform active connection probing
# (that requires a non-zero monitor port). It does cap the maximum
# backoff sleep in autossh's internal restart loop; the default is
# 600s (10 minutes). 30s keeps the ceiling tight during outages.
Environment="AUTOSSH_POLL=30"
Environment="SSOCK=/var/run/signald/signald.sock"
ExecStart=/usr/bin/autossh -M 0 \
    -o "ServerAliveInterval 10" \
    -o "ServerAliveCountMax 3" \
    -o "ExitOnForwardFailure yes" \
    -o "StreamLocalBindUnlink yes" \
    -L ${SSOCK}:${SSOCK} \
    -N remote.server
# SIGHUP prods autossh out of any backoff sleep so it retries
# immediately. This is documented in the autossh README.
# Use SIGUSR1 instead to force-kill and restart the SSH child.
ExecReload=/usr/bin/kill -HUP $MAINPID
# systemd waits 60 seconds before restarting, matching Linux's
# hardcoded TCP_TIMEWAIT_LEN. This ensures the old connection's
# port state has fully expired before the next connection attempt.
Restart=always
RestartSec=60
# Sends SIGTERM to all processes in the cgroup on stop.
KillMode=control-group

[Install]
WantedBy=default.target

For TCP port forwarding instead of Unix socket forwarding, swap -L ${SSOCK}:${SSOCK} for -L 9090:localhost:9090 and drop StreamLocalBindUnlink, which only applies to socket files and removes any stale socket path before binding. Everything else stays the same.

To run multiple tunnels in parallel, the gist above shows how to template this unit for multiple instances with a single shared configuration.

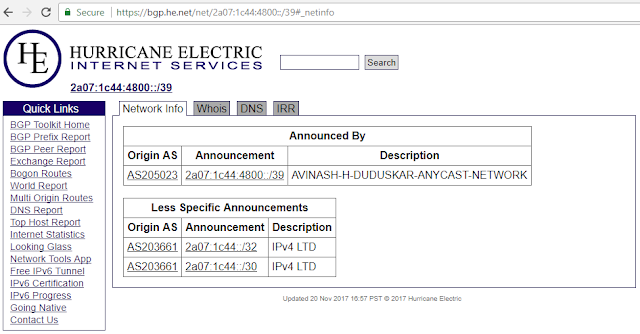

Vincent Bernat's write-up is the definitive reference for a deep dive into TIME-WAIT on Linux, covering tcp_tw_reuse, NAT interactions, and why tcp_tw_recycle was removed in kernel 4.12: https://vincent.bernat.ch/en/blog/2014-tcp-time-wait-state-linux

If you wish to sidestep the TCP-over-TCP problem entirely, Rapid SSH Proxy takes a different approach: it uses the SSH protocol for authentication and encryption but carries the tunnel payload over UDP rather than a nested TCP session. That avoids the congestion collapse problem entirely. The trade-off is that you need the RSP daemon running on both ends.
